600 W Max / 100 W Nominal 6" x 9" 3-Way Coaxial 5-Way Car Audio Speaker, 6x9-Inch Coaxial Speaker for Pioneer TS-A6995R - Buy Coaxial Speaker, 6x9-Inch Coaxial Speaker, Car Audio Speaker

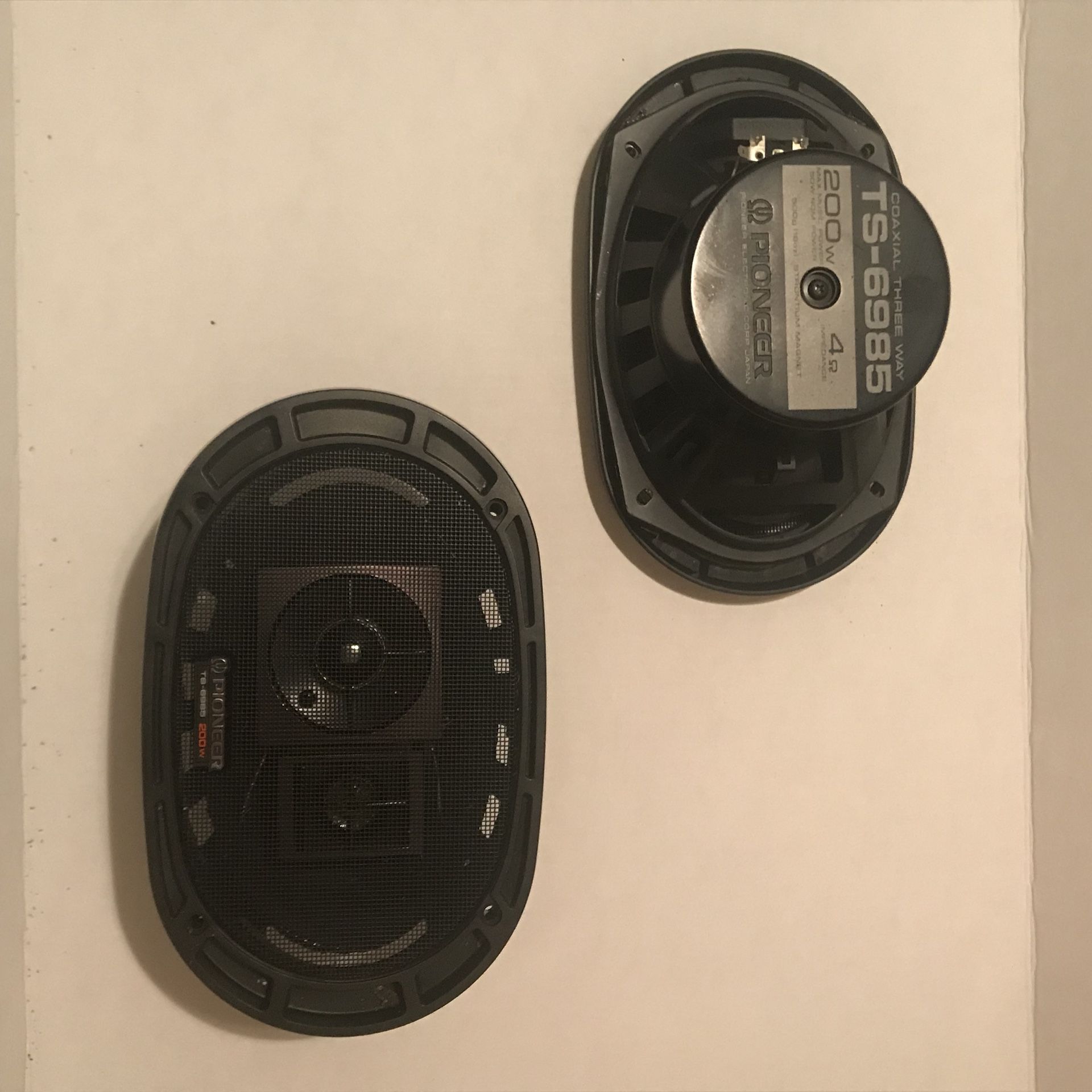

Speaker / Coaxial / PİONEER TS-6985, never repaired, original; Allen retaining clips available at sahibinden.com - 918632341

2 pcs 6x9-Inch 1000 W 12 V 5-Way Car Coaxial Auto Music Stereo Full-Range Frequency Hi-Fi Speakers, Non-Destructive Installation | Coaxial Speakers - AliExpress